I created this issue to propose moving from C3P0 to HikariCP. HikariCP is a newer connection pooling library and a much better one than C3P0. I have been working on adding HikariCP support to a Clojure-based ORM, and have found the HikariCP code better than C3P0’s by miles. I don’t mean to say HikariCP is good code; I have never come across good code in a connection pooling library. But C3P0 is a huge codebase and a pretty bad one at that, while HikariCP is comparatively better and smaller.

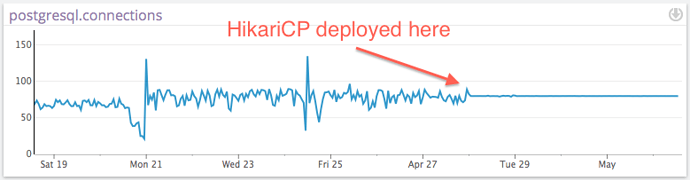

Advantages of using HikariCP:

- Significant performance benefits.
- A better and smaller codebase, which makes it easier to understand.
- It keeps us on a current library rather than a legacy one.
- It is being developed more actively than C3P0 (though both have a concentrated group of contributors rather than a distributed one).

References:

- https://github.com/brettwooldridge/HikariCP/wiki/Pool-Analysis
- http://brettwooldridge.github.io/HikariCP/

Disadvantages:

- Any module that uses C3P0’s constructs directly, rather than going through the OpenMRS or Hibernate SessionFactory abstractions, is going to break.
- The usual disadvantages of moving to a new core library in an open source codebase with a lot of contributors, such as the loss of shared knowledge and understanding.
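To make the first disadvantage concrete: if a deployment only reaches the pool through Hibernate configuration, the switch might come down to translating a few properties. The sketch below assumes the pool is configured through `hibernate.c3p0.*` properties and that the `hibernate-hikaricp` module is on the classpath. I have not checked how OpenMRS actually wires this up. `PoolSettings` and `toHikari` are hypothetical names, and the provider class name should be verified against the Hibernate version in use.

```java
import java.util.Properties;

public class PoolSettings {
    // Assumption: provider class shipped by the hibernate-hikaricp module.
    // Verify this name against the Hibernate version actually in use.
    static final String HIKARI_PROVIDER =
            "org.hibernate.hikaricp.internal.HikariCPConnectionProvider";

    // Hypothetical helper: returns a copy of the given Hibernate properties
    // with the common c3p0 pool settings translated to HikariCP equivalents.
    static Properties toHikari(Properties source) {
        Properties out = new Properties();
        // Keep everything that is not c3p0-specific (dialect, URL, ...).
        for (String key : source.stringPropertyNames()) {
            if (!key.startsWith("hibernate.c3p0.")) {
                out.setProperty(key, source.getProperty(key));
            }
        }
        out.setProperty("hibernate.connection.provider_class", HIKARI_PROVIDER);
        copyScaled(source, "hibernate.c3p0.max_size", out, "hibernate.hikari.maximumPoolSize", 1);
        copyScaled(source, "hibernate.c3p0.min_size", out, "hibernate.hikari.minimumIdle", 1);
        // c3p0 expresses the idle timeout in seconds, HikariCP in milliseconds.
        copyScaled(source, "hibernate.c3p0.timeout", out, "hibernate.hikari.idleTimeout", 1000);
        return out;
    }

    // Copies a numeric property under a new key, multiplied by the given factor.
    private static void copyScaled(Properties from, String fromKey,
                                   Properties to, String toKey, long factor) {
        String value = from.getProperty(fromKey);
        if (value != null) {
            to.setProperty(toKey, Long.toString(Long.parseLong(value.trim()) * factor));
        }
    }
}
```

A module that casts the data source to a C3P0 class, or reads `hibernate.c3p0.*` keys itself, would not be covered by a translation like this, and that is exactly the breakage risk described above.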

I would like to hear the community’s thoughts on this proposal.

: “You should get this new car.”

: “My car is running fine, never had a minute’s trouble, why should I replace it?”

then we may decide that HikariCP is a better out-of-the-box connection pooling solution relative to c3p0 and make it our default.