Hi all,

This is going to be a somewhat long post. Please bear with me, as I think it’s an important discussion of the future, etc.

Vision

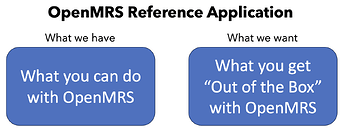

One of the biggest changes between the O2 and O3 Reference Applications is the philosophy with which we’ve tried to build them. The O2 Reference Application is a minimal EMR, meaning that it provides a good amount of core EMR functionality, but it is fundamentally an example (a “reference”) of how the various components that make up O2 can be combined to make a functioning app. It was anticipated that implementations would take that skeleton and rework it for their needs. Most prominently, this meant that there wasn’t always a clear way to build on top of the reference application without forking the repo and manually synchronizing dependencies.

With O3, we’re aiming to have the reference application be more of a “product” than a “reference”, which is to say that we want to enable implementations to build on top of the core of the Reference Application. This is going to be a bit of a process as we figure out the optimal way to do this. To be clear, we still expect that implementations will need to maintain their own distribution repository for the implementation, but the goal is to make it so that this repository can primarily be about managing implementation-specific customizations.

Concretely, we want this implementation to be incremental. An overall goal is to still be able to have the build determined by a single file (distro.properties) that produces the necessary artifacts via the SDK’s build-distro command. There are a few steps to get to this point with O3, but this is still fundamentally how we’re building the backend in the Reference Application.

Frontend

One of the biggest missing pieces in the O3 frontend is something like the plug-and-play nature of OpenMRS’s backend module system. To load a backend module in OpenMRS, you just need to ensure that the OMOD is in the system module directory and it’s automatically loaded for you. Doing this on the frontend is a little more challenging because the O3 frontend is delivered as a set of static files without a server-side component.[1]

Up until now, however, this tooling has required implementations to completely specify all apps and dependencies they want to include, using a file similar to the spa-assemble-config.json in the Reference Application. This becomes a bit of a pain for implementations because there’s no obvious way to take the reference application configuration and add or remove modules from it. In practice, implementations have to monitor our published versions and update their spa-assemble-config.json files when they want to “upgrade to a new version of the Reference Application”.

We now have (for the latest development builds and the upcoming beta) what’s hopefully a more workable solution to this. Specifically, this solution has two components:

- When the frontend is built, it now creates a zip file containing the exact versions of both core and the frontend modules used to build that version of the reference application. This is published to our Maven repository[2] as `org.openmrs.distro:referenceapplication-frontend:3.0.0-SNAPSHOT`. This zip file has a single file in it, called `spa-assemble-config.json`, that is consumable by the NPM CLI `openmrs` tool.
- We’ve added to the `openmrs assemble` command two features that enable implementations to build on top of the published `spa-assemble-config.json`[3]. First, it’s now possible to specify multiple configuration files on the command line for the assemble command. The tooling will merge these files, allowing new custom modules to be provided. Second, the `assemble` configuration file now allows specifying a `frontendModuleExcludes` property, which is an array of the names of modules to exclude. This allows an implementation to remove applications included in the Reference Application.
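To make the merge-and-exclude behaviour described above a bit more concrete, here is a minimal sketch of how I’d expect the merged result to look. This is not the actual implementation in the `openmrs` tool. The `coreVersion` and `frontendModules` field names, the "later file wins" rule, the `@example/esm-custom-app` module, and all version numbers are assumptions made for illustration; only `frontendModuleExcludes` is taken from the description above.

```typescript
// Assumed shape of an assemble config file. Only `frontendModuleExcludes`
// is named in this post; the other field names are assumptions.
interface AssembleConfig {
  coreVersion?: string;
  frontendModules?: Record<string, string>;
  frontendModuleExcludes?: string[];
}

// Merge configs left to right (assumption: later files override earlier
// ones), then drop every module listed in any `frontendModuleExcludes`.
function mergeAssembleConfigs(configs: AssembleConfig[]): AssembleConfig {
  let modules: Record<string, string> = {};
  let excludes: string[] = [];
  let coreVersion: string | undefined;

  for (const config of configs) {
    if (config.coreVersion !== undefined) {
      coreVersion = config.coreVersion;
    }
    modules = { ...modules, ...(config.frontendModules ?? {}) };
    excludes = excludes.concat(config.frontendModuleExcludes ?? []);
  }

  for (const name of excludes) {
    delete modules[name];
  }

  const merged: AssembleConfig = { frontendModules: modules };
  if (coreVersion !== undefined) {
    merged.coreVersion = coreVersion;
  }
  return merged;
}

// Stand-in for the published Reference Application config (made-up versions).
const baseConfig: AssembleConfig = {
  coreVersion: "5.0.0",
  frontendModules: {
    "@openmrs/esm-login-app": "5.0.0",
    "@openmrs/esm-home-app": "5.0.0",
  },
};

// Stand-in for an implementation's own config layered on top.
const customConfig: AssembleConfig = {
  frontendModules: {
    "@openmrs/esm-login-app": "5.1.0", // override a Reference Application module
    "@example/esm-custom-app": "1.0.0", // hypothetical custom module
  },
  frontendModuleExcludes: ["@openmrs/esm-home-app"],
};

// Under these assumptions, `assembled.frontendModules` contains the login
// app at 5.1.0 and the custom app at 1.0.0, and no home app.
const assembled = mergeAssembleConfigs([baseConfig, customConfig]);
```

The point is that an implementation’s own file only needs to describe what differs from the Reference Application, rather than restating every module.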

Docker

We’ve been pushing pretty hard on the community to use Docker / OCI containers as a deployment mechanism. Our frontend image is designed to be re-usable in deployments. All that is necessary is to replace the contents in /usr/share/nginx/html/ on the image with whatever static frontend files you want to serve. For example, you could use a docker-compose descriptor like:

```yaml
# ... snip ...
  frontend:
    image: openmrs/openmrs-reference-application-3-frontend:3.0.0-beta.16
    restart: "unless-stopped"
    environment:
      SPA_PATH: /openmrs/spa
      API_URL: /openmrs
      SPA_CONFIG_URLS:
      SPA_DEFAULT_LOCALE:
    healthcheck:
      test: ["CMD", "curl", "-f", "http://localhost/"]
      timeout: 5s
    depends_on:
      - backend
    volumes:
      - './frontend:/usr/share/nginx/html/'
```

This assumes that the local ./frontend directory contains the built frontend files. Alternatively, if you wanted to publish your own Docker container, you could use something like this example Dockerfile:

```dockerfile
FROM openmrs/openmrs-reference-application-3-frontend:3.0.0-beta.16

RUN rm -rf /usr/share/nginx/html/*

COPY ./frontend /usr/share/nginx/html/
```

This does basically the same thing as mounting the volume, except that the files are baked into the image.

Future Steps

Currently, leveraging all of this is a little clunky. Hopefully over time, we’ll be able to iron things out with some work in the SDK to more easily support these new features and to add something similar for backend configurations. Specifically:

- `distro.properties` should support specifying the frontend artifact version and use that as a base when building the frontend.
- The SDK should generate Docker images built on our newer Docker images rather than the old versions that are being used.
- We should extend the `build-distro` command with capabilities to override an existing distribution similar to the ones described here.

[1] It’s also unclear if there’s a straightforward modular frontend deployment package we could depend on. The existing solutions I’m aware of either (1) rely on a long-running backend service that serves as the “module registry”, like Piral’s feeds or single-spa’s import-map-deployer, or (2) require a hosted CDN instance, like Baseplate and Piral’s default setup. Neither is practical.

The solution that most closely resembles OpenMRS’s backend module system would be to have a server-side instance that parses through a set of modules and builds the necessary routes.registry.json and importmap.json files. The necessary metadata is basically already in the NPM package files. I attempted something like that here, albeit without a full server-side component, since a server-side component. The main issue with this approach is that it is hard to make it work on machines without access to the internet.

[2] If you’re a frontend developer, publishing the record of the frontend artifacts used to Maven may look like a funny decision. Ultimately, our SDK is based on Maven and most existing distribution builds are heavily dependent on Maven, so this seemed like the option that would cause the least friction. If there’s an ask for it, we could also publish something to the NPM registry, though.

[3] Currently the NPM tooling is not capable of working directly with the zip file. The expectation is that another process downloads and consumes the file.