I am currently working on the task “Resolving random test failures on GitHub” mentioned in this thread. I will use this thread to post my updates and discuss the task. So far I have identified two failing tests.

RefApp 2.x Visit Note test:

- This test passes when run locally.

- However, when run with GitHub Actions, it fails randomly at the “Save visit Note” step.

- I’ll look into this further.

OCL Subscription Module Test:

The OCL Subscription Module Test was failing due to an incorrect Cypress configuration (baseUrl) and a security measure (OWASP CSRFGuard). Even after fixing the configuration, the test was unable to access the login page because the CSRFGuard script was blocking access from an unauthorized domain.

The CSRFGuard script validates the domain by comparing the value of document.domain with qa-refapp.openmrs.org. In normal browsers, this works because document.domain returns qa-refapp.openmrs.org. In Cypress, however, document.domain returns only openmrs.org, without the subdomain.
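To make the mismatch concrete, here is a minimal sketch of that kind of check. It is a simplification under stated assumptions: `isAllowedDomain` is a hypothetical name, and the real CSRFGuard script may apply additional or more lenient rules than a plain equality test.

```typescript
// Hypothetical, simplified domain check (not the actual CSRFGuard code).
// It only accepts the page when the reported domain equals the expected one.
function isAllowedDomain(currentDomain: string, expectedDomain: string): boolean {
  return currentDomain.toLowerCase() === expectedDomain.toLowerCase();
}

const expectedDomain = "qa-refapp.openmrs.org";

// A regular browser reports the full host name as document.domain.
const inBrowser = isAllowedDomain("qa-refapp.openmrs.org", expectedDomain);

// Under Cypress, document.domain is reported without the subdomain.
const inCypress = isAllowedDomain("openmrs.org", expectedDomain);

console.log({ inBrowser, inCypress });
```

With these inputs the check accepts the regular browser and rejects the Cypress run, which matches the behaviour described above.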

According to this comment on a similar issue, the Cypress team configured that behaviour intentionally to avoid some issues with subdomains. As a workaround, they suggest turning off the OWASP CSRFGuard restrictions during testing, but I think that might raise security concerns, so it won’t be possible for us.

According to my findings, there’s no such issue in Playwright. So my suggestion is to migrate this test to Playwright, because it’s the only remaining Cypress test in the contrib-qa-framework. What do you think @jayasanka @kdaud ?

Thanks for the update @piumal1999 !

I agree with you, the straightforward solution would be to use Playwright and move it to the relevant repository. We can add it to our priority list. Currently, we are running tests against a Docker container in GitHub Actions, so this issue shouldn’t present itself in that environment. For the time being, let’s address it by configuring Cypress correctly. Afterwards, we can add the migration task to our to-do list. How does that sound to you?

Thanks @piumal1999 for taking a look at this test. I am having the same issue with the Location Management workflow that I am working on: it passes locally but fails on GitHub Actions.

I will be looking forward to your fix.

Which server are you using for the local plans?

@mherman22 I have left some review comments on your PR that need to be addressed.

@jayasanka Yeah, that makes sense. I’ll create a separate issue for the migration. For now, I’ll try the Docker method. Thanks for the response.

I used the Login server. The GitHub workflow runs against a Docker deployment, so I’ll try it locally with a Docker container as well.

There’s nothing wrong with the GitHub workflow or the server. The error is due to some incorrect assertions and the order of the steps. The tests passed only when the server was not fast enough to load the pages quickly. I’ll fix those and send a pull request.
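A rough sketch of why the order of the assertion matters, using a simulated page rather than the real Selenium test. Everything here is an assumption made for illustration: `FakePage`, the element ids, and the timings are hypothetical and do not come from the actual test code.

```typescript
// Hypothetical model of a page that redirects some time after "save" is clicked.
type PageName = "visit-note" | "visits";

class FakePage {
  private now = 0;
  private savedAt: number | null = null;
  private redirectDelayMs: number;

  constructor(redirectDelayMs: number) {
    this.redirectDelayMs = redirectDelayMs;
  }

  clickSave(): void {
    this.savedAt = this.now;
  }

  // Advance the simulated clock.
  advance(ms: number): void {
    this.now += ms;
  }

  currentPage(): PageName {
    const redirected =
      this.savedAt !== null && this.now - this.savedAt >= this.redirectDelayMs;
    return redirected ? "visits" : "visit-note";
  }

  // An element is only present on the page it belongs to.
  hasElement(id: string): boolean {
    const elements = {
      "visit-note": ["diagnosis-card"],
      "visits": ["visit-note-row"],
    };
    return elements[this.currentPage()].indexOf(id) !== -1;
  }
}

// Poll (in simulated time) until the condition holds or the timeout elapses.
function waitFor(page: FakePage, condition: () => boolean, timeoutMs: number, stepMs = 50): boolean {
  for (let waited = 0; waited <= timeoutMs; waited += stepMs) {
    if (condition()) return true;
    page.advance(stepMs);
  }
  return false;
}

// Fragile: asserts at a fixed moment on an element that exists only before the redirect.
function fragileCheck(redirectDelayMs: number, checkAfterMs: number): boolean {
  const page = new FakePage(redirectDelayMs);
  page.clickSave();
  page.advance(checkAfterMs);
  return page.hasElement("diagnosis-card");
}

// Sturdier: waits for an element that exists only after the redirect.
function robustCheck(redirectDelayMs: number): boolean {
  const page = new FakePage(redirectDelayMs);
  page.clickSave();
  return waitFor(page, () => page.hasElement("visit-note-row"), 5000);
}

console.log(fragileCheck(1000, 100), fragileCheck(50, 100));
console.log(robustCheck(1000), robustCheck(50));
```

In this model the fragile check passes with a slow redirect (1000 ms) and fails with a fast one (50 ms), while the check that waits for a post-redirect element passes in both cases.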

Updates:

- Found a similar bug in the 2.x Clinical Visit Test. Created a ticket for that.

-

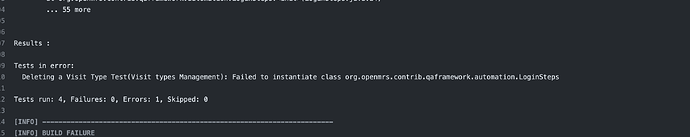

The next type of issue i identified is:

These type of issues occur randomly in most of the tests when running. According to the error log, the reason for this is a browser start-up failure. I couldn’t find the exact root cause yet.

-

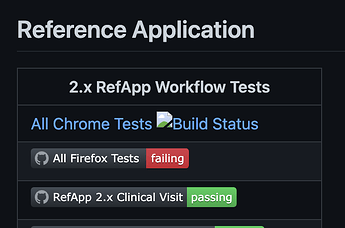

By the way, why don’t we have a workflow for this

All Chrome Testsstatus badge? If we are not using it anymore, shall I create a ticket to remove the status badge?

cc: @jayasanka @kdaud

AFAIK such issues may be caused by the server, as a result of connectivity, when running builds locally against an online instance. What actually happens is that the server may take longer to load the page components (elements), which causes some steps in the Selenium tests to fail randomly.

Possible solutions:

- Use a faster connection when running local builds against an online instance (demo or qa-refapp). Alternatively, use a local server, though you need to set up the latest snapshot of RefApp that contains the latest changes (the SDK provides it during the setup process).

- Re-run the tests that fail randomly to confirm. If the error is reported repeatedly, then it’s worth investigating the test(s).

- Sometimes the issue may be caused by the PC, whose memory needs to be well managed so that enough resources can be allocated to different tasks.
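The "re-run and confirm" step above can be sketched roughly as follows. This is only an illustration under assumptions: `classifyTest` and `fromSequence` are hypothetical helpers, and a real setup would re-run the actual test job instead of a canned sequence of results.

```typescript
type Outcome = "stable-pass" | "flaky" | "consistent-failure";

// Run a test several times and classify it by how often it passes.
function classifyTest(runTest: () => boolean, runs: number): Outcome {
  let passes = 0;
  for (let i = 0; i < runs; i++) {
    if (runTest()) passes++;
  }
  if (passes === runs) return "stable-pass";
  if (passes === 0) return "consistent-failure";
  return "flaky";
}

// Hypothetical stand-in for a real test: replays a fixed sequence of results.
function fromSequence(results: boolean[]): () => boolean {
  let index = 0;
  return () => {
    const result = results[index % results.length] === true;
    index++;
    return result;
  };
}

const randomFailure = classifyTest(fromSequence([false, true, true]), 3);
const realBug = classifyTest(fromSequence([false]), 3);
const healthy = classifyTest(fromSequence([true]), 3);

console.log({ randomFailure, realBug, healthy });
```

A test classified as a consistent failure is the one worth investigating first. A flaky one points more towards timing, connectivity, or resource problems.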

I have done a manual run of the RefApp Clinical Visit Test via GitHub Actions and the build is happy.

The All Chrome Tests badge tracks the latest CI build status for the qaframework module. On our CI, these automated tests run on the Chrome engine against the qa-refapp server, which contains the latest changes of RefApp.

@piumal1999 do you think you can investigate why the badge is failing to retrieve the CI build status?

cc: @sharif

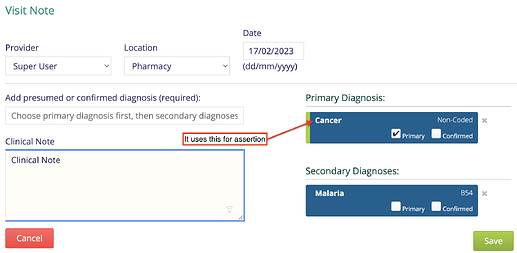

This usually occurs when running the All Firefox Tests workflow which contains all the tests.

This might be the reason.

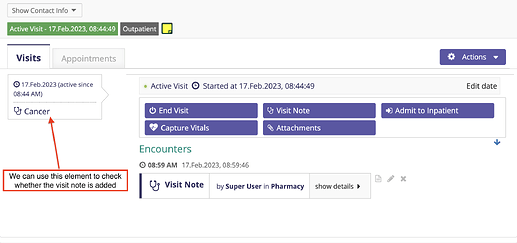

In that workflow, we have a test for visit notes. It adds a primary diagnosis and a secondary diagnosis to the visit note, saves it, and then checks for the saved note.

The way this test is written, after saving the note it checks for the saved note using the following element. If the server is slow enough, the test can check for the element before the page redirects to the visits page, and so it passes.

Instead of that, we can use the following elements for the assertion.

Sure. I’ll have a look.

@piumal1999 are you able to share a PR so we can see what the test would look like?

It will be similar to this PR. Since @chiran has already assigned himself to the ticket, I think he can continue working on it by referring to that PR.

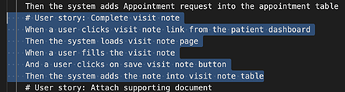

The updated user story would look like this:

# User story: Complete visit note

When a user clicks visit note link from the patient dashboard

Then the system loads visit note page

When a user fills the visit note

Then the system displays the diagnosis cards

When the user clicks on save visit note button

Then the system adds the note into visit note table

The Bamboo workflow (CONTRIB-QA) has been disabled due to poor performance (check this Slack thread). It would be better if we could eliminate the CONTRIB-QA plan and integrate the 2.x E2E tests into the 2.x platform and RefApp plans.