Hello @dkayiwa,

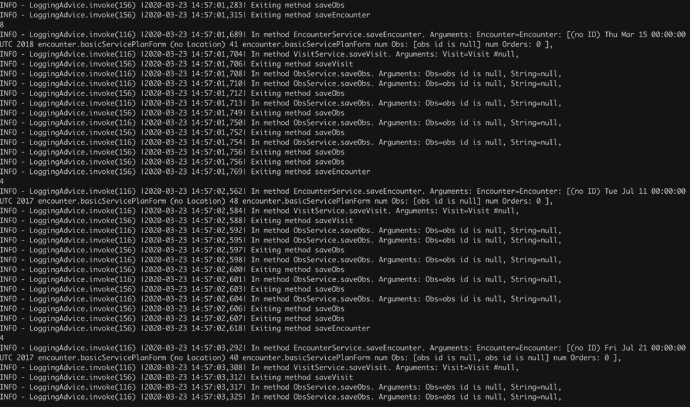

These are the logs for one of the encounters, captured while the tool was posting encounters:

INFO - LoggingAdvice.invoke(116) |2020-03-23 14:57:01,227| In method EncounterService.saveEncounter. Arguments: Encounter=Encounter: [(no ID) Tue Jul 30 00:00:00 UTC 2019 encounter.basicServicePlanForm (no Location) 39 encounter.basicServicePlanForm num Obs: [obs id is null, obs id is null] num Orders: 0 ],

INFO - LoggingAdvice.invoke(116) |2020-03-23 14:57:01,238| In method VisitService.saveVisit. Arguments: Visit=Visit #null,

INFO - LoggingAdvice.invoke(156) |2020-03-23 14:57:01,241| Exiting method saveVisit

INFO - LoggingAdvice.invoke(116) |2020-03-23 14:57:01,247| In method ObsService.saveObs. Arguments: Obs=obs id is null, String=null,

INFO - LoggingAdvice.invoke(116) |2020-03-23 14:57:01,250| In method ObsService.saveObs. Arguments: Obs=obs id is null, String=null,

INFO - LoggingAdvice.invoke(156) |2020-03-23 14:57:01,253| Exiting method saveObs

INFO - LoggingAdvice.invoke(116) |2020-03-23 14:57:01,254| In method ObsService.saveObs. Arguments: Obs=obs id is null, String=null,

INFO - LoggingAdvice.invoke(156) |2020-03-23 14:57:01,256| Exiting method saveObs

INFO - LoggingAdvice.invoke(116) |2020-03-23 14:57:01,257| In method ObsService.saveObs. Arguments: Obs=obs id is null, String=null,

INFO - LoggingAdvice.invoke(156) |2020-03-23 14:57:01,259| Exiting method saveObs

INFO - LoggingAdvice.invoke(116) |2020-03-23 14:57:01,260| In method ObsService.saveObs. Arguments: Obs=obs id is null, String=null,

INFO - LoggingAdvice.invoke(156) |2020-03-23 14:57:01,262| Exiting method saveObs

INFO - LoggingAdvice.invoke(156) |2020-03-23 14:57:01,263| Exiting method saveObs

INFO - LoggingAdvice.invoke(116) |2020-03-23 14:57:01,265| In method ObsService.saveObs. Arguments: Obs=obs id is null, String=null,

INFO - LoggingAdvice.invoke(116) |2020-03-23 14:57:01,268| In method ObsService.saveObs. Arguments: Obs=obs id is null, String=null,

INFO - LoggingAdvice.invoke(156) |2020-03-23 14:57:01,270| Exiting method saveObs

INFO - LoggingAdvice.invoke(116) |2020-03-23 14:57:01,272| In method ObsService.saveObs. Arguments: Obs=obs id is null, String=null,

INFO - LoggingAdvice.invoke(156) |2020-03-23 14:57:01,274| Exiting method saveObs

INFO - LoggingAdvice.invoke(116) |2020-03-23 14:57:01,276| In method ObsService.saveObs. Arguments: Obs=obs id is null, String=null,

INFO - LoggingAdvice.invoke(156) |2020-03-23 14:57:01,279| Exiting method saveObs

INFO - LoggingAdvice.invoke(116) |2020-03-23 14:57:01,280| In method ObsService.saveObs. Arguments: Obs=obs id is null, String=null,

INFO - LoggingAdvice.invoke(156) |2020-03-23 14:57:01,282| Exiting method saveObs

INFO - LoggingAdvice.invoke(156) |2020-03-23 14:57:01,283| Exiting method saveObs

INFO - LoggingAdvice.invoke(156) |2020-03-23 14:57:01,315| Exiting method saveEncounter

8

The above is one chunk from my console. All lines except the last one appear to be related to saveEncounter/saveObs, and the last one is from my Listener. Since it is a direct sysout, it just prints the number.
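In case it helps with reading chunks like this, here is a rough sketch of how the lines could be tallied to confirm that every "In method" entry has a matching "Exiting method" line, and to separate out anything that is not a LoggingAdvice message (such as the bare number from my Listener). The `tally` helper and the regular expressions are my own assumptions based only on the two message shapes visible above, and the sample lines are copied from the chunk; it is not part of OpenMRS.

```python
import re
from collections import Counter

# Assumed patterns, derived only from the two LoggingAdvice message shapes in the chunk above.
ENTER = re.compile(r"In method (\w+)\.(\w+)\.")
EXIT = re.compile(r"Exiting method (\w+)")


def tally(lines):
    """Count entries and exits per method name; collect lines matching neither pattern."""
    entered, exited, other = Counter(), Counter(), []
    for line in lines:
        line = line.strip()
        if not line:
            continue  # skip the blank separator lines
        match = ENTER.search(line)
        if match:
            entered[match.group(2)] += 1  # group 2 is the method name, e.g. saveObs
            continue
        match = EXIT.search(line)
        if match:
            exited[match.group(1)] += 1
            continue
        other.append(line)  # e.g. the direct sysout from the Listener
    return entered, exited, other


# Three lines copied from the console chunk above.
sample = [
    "INFO - LoggingAdvice.invoke(116) |2020-03-23 14:57:01,238| In method VisitService.saveVisit. Arguments: Visit=Visit #null,",
    "INFO - LoggingAdvice.invoke(156) |2020-03-23 14:57:01,241| Exiting method saveVisit",
    "8",
]

entered, exited, other = tally(sample)
print("entered:", dict(entered))
print("exited:", dict(exited))
print("other lines:", other)
```

Running this over the whole chunk, pasted into a list or read from a file, would show whether the entry and exit counts agree for each method and which lines fall outside the saveEncounter/saveObs logging.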